AWS Lambda, App Runner, and Batch in CMDB: Why Serverless Assets Need Configuration Management Too

TL;DR

Serverless does not mean infrastructure-free. AWS Lambda functions, App Runner services, and Batch compute environments are configuration items with runtime versions, dependency relationships, and change histories. A Lambda function update can silently break the application that calls it. Without CMDB coverage for serverless assets, change management has no visibility into serverless-sourced incidents. This article covers how each service type maps to CMDB CIs, why dependency mapping is the core value, and how Virima 6.1.1 brings serverless assets into your configuration model.

The Serverless Paradox

“Serverless” is one of the most successful marketing terms in cloud computing, and one of the most misleading. AWS Lambda functions run on servers — AWS-managed servers that you never see, never patch, and never configure. But “no servers to manage” does not mean “no configuration items to manage.”

Every Lambda function has a name, a runtime version, a memory allocation, a timeout setting, a set of triggers, and a set of downstream dependencies. Every one of those attributes is a configuration attribute. Any one of them can change. Any change can produce an incident. And any incident tied to a Lambda function update will be harder to resolve if your CMDB has no record that the function exists, let alone what changed.

The serverless paradox: the operational simplicity of serverless compute at the infrastructure level creates new complexity at the configuration management level. Because serverless removes the server — the traditional anchor of CMDB discovery — it also removes the natural discovery path. Most CMDB tools discover servers and then find software on them. There is no server to find for a Lambda function.

This is the CMDB gap that serverless creates. And it is growing fast. AWS Lambda processes trillions of function executions per month. Enterprise IT teams run hundreds to thousands of Lambda functions supporting production applications. As that footprint grows, so does the likelihood that a given production incident involves a Lambda function.

AWS Lambda as a Configuration Item

A Lambda function is a fully valid configuration item. It has identity, configuration attributes, relationships, and a change history. What makes a Lambda a CI:

Identity Attributes

- Function name — the stable identifier; names do not change after creation

- Function ARN — the globally unique resource identifier across AWS

- AWS account and region — location context for multi-account environments

Configuration Attributes

These are the attributes that change management and incident management care about:

- Runtime — the language and version (e.g., python3.12, nodejs22.x, java21). AWS deprecates older runtimes on a published schedule and eventually blocks updates to functions that still use them. A runtime change is a change event.

- Memory allocation — from 128 MB to 10,240 MB; affects performance and cost

- Timeout — maximum execution duration (1 second to 15 minutes); a timeout setting that is too low causes silent function failures

- Handler — the entry point in the code package

- Code size and package type — zip vs. container image; a shift from zip to container image is a major change

- Layers — shared code libraries attached to a function at a specific version; moving to a new layer version changes the behavior of every function that adopts it

- Environment variables — configuration passed to functions at runtime; changes here are often undocumented
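As a rough sketch of how these attributes might land in a CI record, the Python below flattens a function configuration dictionary into a small dataclass. The input keys (FunctionName, Runtime, MemorySize, and so on) are assumed to follow the shape of the Lambda GetFunctionConfiguration response. The LambdaCI class, its field names, and the sample values are hypothetical and do not represent a Virima schema.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class LambdaCI:
    """Hypothetical CI record for one Lambda function (illustrative only)."""
    name: str
    arn: str
    runtime: Optional[str]   # may be absent for container-image functions
    memory_mb: int
    timeout_s: int
    handler: Optional[str]
    package_type: str        # "Zip" or "Image"
    layer_arns: Tuple[str, ...] = ()
    env_var_names: Tuple[str, ...] = ()


def lambda_ci_from_config(cfg: dict) -> LambdaCI:
    """Map a GetFunctionConfiguration-style dict onto the CI record."""
    env = cfg.get("Environment", {}).get("Variables", {})
    return LambdaCI(
        name=cfg["FunctionName"],
        arn=cfg["FunctionArn"],
        runtime=cfg.get("Runtime"),
        memory_mb=cfg["MemorySize"],
        timeout_s=cfg["Timeout"],
        handler=cfg.get("Handler"),
        package_type=cfg.get("PackageType", "Zip"),
        layer_arns=tuple(layer["Arn"] for layer in cfg.get("Layers", [])),
        # Keep variable names only; values frequently contain secrets.
        env_var_names=tuple(sorted(env)),
    )


# Made-up sample input for illustration.
sample = {
    "FunctionName": "payment-validator",
    "FunctionArn": "arn:aws:lambda:us-east-1:123456789012:function:payment-validator",
    "Runtime": "python3.12",
    "MemorySize": 512,
    "Timeout": 3,
    "Handler": "app.handler",
    "PackageType": "Zip",
    "Layers": [{"Arn": "arn:aws:lambda:us-east-1:123456789012:layer:shared-logic:7"}],
    "Environment": {"Variables": {"PAYMENT_API_URL": "https://example.invalid", "FEATURE_X": "on"}},
}
ci = lambda_ci_from_config(sample)
print(ci.runtime, ci.memory_mb, ci.timeout_s, ci.env_var_names)
```

Recording only the environment variable names is a deliberate choice in this sketch: it lets a change record show that a variable was added or removed without copying sensitive values into the CMDB.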

Trigger Relationships

A Lambda function has upstream triggers: the event sources that invoke it. Common triggers include:

- API Gateway — HTTP requests route through API Gateway to invoke Lambda

- S3 — object upload events trigger Lambda processing functions

- SQS — queue messages trigger Lambda consumers

- EventBridge — scheduled or event-driven invocations

- DynamoDB Streams — data change events trigger Lambda processors

Each trigger is a relationship in the CMDB. The Lambda CI is related upstream to its trigger CIs and downstream to its dependencies (databases, other APIs, other Lambda functions).
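One way to turn trigger data into CMDB relationships is sketched below. It assumes input shaped like the EventSourceMappings list returned by Lambda's ListEventSourceMappings call, which covers poll-based sources such as SQS and DynamoDB Streams. Push-based triggers such as API Gateway, S3, and EventBridge are configured elsewhere (for example in the function's resource policy) and are not handled here. The Relationship type and the "triggers" label are hypothetical.

```python
from typing import List, NamedTuple


class Relationship(NamedTuple):
    source: str    # ARN of the upstream trigger CI
    relation: str  # hypothetical relationship label
    target: str    # ARN of the Lambda function CI
    service: str   # AWS service parsed from the source ARN


def arn_service(arn: str) -> str:
    """Return the service field of an ARN: arn:partition:service:region:account:resource."""
    parts = arn.split(":", 5)
    if len(parts) < 6 or parts[0] != "arn":
        raise ValueError(f"not an ARN: {arn!r}")
    return parts[2]


def trigger_relationships(mappings: List[dict]) -> List[Relationship]:
    """Build one upstream edge per event source mapping that names a source ARN."""
    edges = []
    for m in mappings:
        source = m.get("EventSourceArn")
        if not source:  # some mappings (e.g. self-managed sources) carry no ARN
            continue
        edges.append(Relationship(source, "triggers", m["FunctionArn"], arn_service(source)))
    return edges


# Made-up sample mappings for illustration.
fn = "arn:aws:lambda:us-east-1:123456789012:function:fulfillment-dispatcher"
mappings = [
    {"EventSourceArn": "arn:aws:sqs:us-east-1:123456789012:order-fulfillment-queue",
     "FunctionArn": fn, "State": "Enabled"},
    {"EventSourceArn": "arn:aws:dynamodb:us-east-1:123456789012:table/orders/stream/2024-01-01T00:00:00.000",
     "FunctionArn": fn, "State": "Enabled"},
    {"FunctionArn": fn, "State": "Enabled"},
]
edges = trigger_relationships(mappings)
for e in edges:
    print(e.service, "->", e.target)
```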

Versions and Aliases

Lambda supports versioned deployments. Each published version is immutable. Aliases (e.g., production, staging) point to specific versions. When a deployment moves the production alias from version 47 to version 48, that is a change event — the function behavior changed for all callers using the production alias.
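The alias move described above can be detected by comparing two discovery snapshots. The sketch below assumes each snapshot is a list of dictionaries with Name and FunctionVersion keys, as in the Aliases list returned by Lambda's ListAliases call. The alias_changes helper and its output format are hypothetical.

```python
from typing import List


def alias_changes(before: List[dict], after: List[dict]) -> List[dict]:
    """Return one change event per alias whose target version differs between snapshots."""
    old = {a["Name"]: a["FunctionVersion"] for a in before}
    new = {a["Name"]: a["FunctionVersion"] for a in after}
    changes = []
    for name in sorted(old.keys() | new.keys()):
        if old.get(name) != new.get(name):
            # None on either side means the alias was created or deleted.
            changes.append({"alias": name, "from": old.get(name), "to": new.get(name)})
    return changes


yesterday = [{"Name": "production", "FunctionVersion": "47"},
             {"Name": "staging", "FunctionVersion": "48"}]
today = [{"Name": "production", "FunctionVersion": "48"},
         {"Name": "staging", "FunctionVersion": "48"}]

events = alias_changes(yesterday, today)
print(events)
```

Only the production alias appears in the result, because staging already pointed at version 48 in both snapshots.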

AWS App Runner: Managed Containers in CMDB

AWS App Runner is a managed container service that runs containerized applications without requiring the user to manage clusters, nodes, or load balancers. It sits between Lambda (fully serverless) and ECS (container orchestration with more control). For discovery of container platforms, including EKS, ECS, and AKS, see our Kubernetes CMDB Discovery guide.

App Runner differs from ECS in a critical way: there are no clusters to manage, no task definitions to version, and no service scheduler to configure. You provide a container image or a source code repository, and App Runner builds, deploys, and scales the application automatically.

From a CMDB perspective, the App Runner Service is the primary CI:

App Runner Service Attributes

- Service name and ARN — stable identity

- Source configuration — container image URI or source repository URL; changes here represent deployments

- CPU and memory configuration — the allocated resources per instance

- Auto-scaling configuration — min/max instance count, concurrency setting

- Service URL — the HTTPS endpoint that the service exposes

- Health check configuration — affects service availability behavior

- Status — RUNNING, PAUSED, CREATE_FAILED
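A minimal sketch of extracting these attributes follows. It assumes a dictionary shaped like the Service object in App Runner's DescribeService response (ServiceName, SourceConfiguration, InstanceConfiguration, and so on). The app_runner_ci function and its output keys are hypothetical, as are the sample values.

```python
def app_runner_ci(service: dict) -> dict:
    """Flatten a DescribeService-style Service dict into CI attributes."""
    src = service.get("SourceConfiguration", {})
    image = src.get("ImageRepository", {}).get("ImageIdentifier")
    repo = src.get("CodeRepository", {}).get("RepositoryUrl")
    inst = service.get("InstanceConfiguration", {})
    if image:
        kind = "image"
    elif repo:
        kind = "repository"
    else:
        kind = "unknown"
    return {
        "name": service["ServiceName"],
        "arn": service["ServiceArn"],
        "url": service.get("ServiceUrl"),
        "status": service.get("Status"),
        "source_kind": kind,
        "source": image or repo,
        "cpu": inst.get("Cpu"),
        "memory": inst.get("Memory"),
    }


# Made-up sample input for illustration.
svc = {
    "ServiceName": "order-web",
    "ServiceArn": "arn:aws:apprunner:us-east-1:123456789012:service/order-web/abc123",
    "ServiceUrl": "abc123.us-east-1.awsapprunner.com",
    "Status": "RUNNING",
    "SourceConfiguration": {
        "ImageRepository": {
            "ImageIdentifier": "123456789012.dkr.ecr.us-east-1.amazonaws.com/order-web:1.4.2",
            "ImageRepositoryType": "ECR",
        }
    },
    "InstanceConfiguration": {"Cpu": "1024", "Memory": "2048"},
}
record = app_runner_ci(svc)
print(record["source_kind"], record["source"])
```

Because the image URI includes the tag, a change in the source value between two discovery runs is a reasonable signal that a deployment occurred.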

App Runner vs. ECS in the CMDB

The key distinction for CMDB modeling: App Runner services are simpler CIs than ECS services because they abstract away the cluster and task infrastructure. The CMDB does not need to capture node groups, task definitions, or capacity providers for App Runner. The service itself, its source image, and its scaling configuration are sufficient.

App Runner services typically sit behind custom domains (via App Runner Custom Domains) and serve web applications or APIs. CMDB relationships for App Runner services include:

- The container image repository (Amazon ECR) the service pulls from

- Any downstream databases or services the application connects to

- The AWS account and VPC connector (if the service connects to private resources)

AWS Batch: Infrastructure-as-Code That Still Needs Tracking

AWS Batch is a managed batch computing service that runs large-scale computational jobs — data processing, scientific simulations, financial modeling, ETL pipelines — without requiring the user to manage the underlying compute infrastructure.

Batch is frequently overlooked in CMDB conversations because it is not a web-facing service and does not run continuously. But Batch infrastructure is real, persistent, and subject to configuration drift:

Compute Environments as CIs

A Compute Environment is the foundation of AWS Batch. It defines the compute resources (EC2 instances or Fargate) that run jobs:

- Compute environment name and ARN

- Type — MANAGED vs. UNMANAGED; MANAGED means AWS handles instance provisioning

- Compute resources type — EC2, SPOT, FARGATE, or FARGATE_SPOT

- vCPU range (min/max/desired for EC2-backed environments)

- Instance types allowed in the environment

- Security groups and subnets — network configuration

- Status — VALID, INVALID, DELETING

A change to the compute environment — switching from on-demand EC2 to Spot, adjusting the vCPU range, modifying network security groups — is a configuration change with potential service impact.

Job Queues as CIs

Job queues sit between job submissions and compute environments:

- Queue name and ARN

- Priority — higher priority queues consume compute capacity first

- Compute environment associations — which compute environments the queue can use, in priority order

- State — ENABLED or DISABLED
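The queue-to-environment associations translate naturally into ordered CMDB relationships. The sketch below assumes input shaped like the jobQueues list from Batch's DescribeJobQueues call, where computeEnvironmentOrder holds entries with order and computeEnvironment keys. The "uses" label and the tuple layout are hypothetical.

```python
from typing import List, Tuple


def queue_relationships(job_queues: List[dict]) -> List[Tuple[str, str, str, int]]:
    """Return (queue ARN, relation, compute environment ARN, order) edges, lowest order first."""
    edges = []
    for q in job_queues:
        ordered = sorted(q.get("computeEnvironmentOrder", []), key=lambda e: e["order"])
        for entry in ordered:
            edges.append((q["jobQueueArn"], "uses", entry["computeEnvironment"], entry["order"]))
    return edges


# Made-up sample input for illustration.
queues = [{
    "jobQueueName": "etl-high",
    "jobQueueArn": "arn:aws:batch:us-east-1:123456789012:job-queue/etl-high",
    "state": "ENABLED",
    "priority": 10,
    "computeEnvironmentOrder": [
        {"order": 2, "computeEnvironment": "arn:aws:batch:us-east-1:123456789012:compute-environment/spot-pool"},
        {"order": 1, "computeEnvironment": "arn:aws:batch:us-east-1:123456789012:compute-environment/ondemand-pool"},
    ],
}]
queue_edges = queue_relationships(queues)
for edge in queue_edges:
    print(edge)
```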

Job Definitions as CIs

A job definition is the template for a Batch job — analogous to an ECS task definition:

- Job definition name and revision

- Container image and command

- vCPU and memory requirements

- Platform capabilities — EC2 vs. Fargate

Like ECS task definitions, job definition revisions are discrete change events. Tracking which revision runs in production matters for change management.
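As an illustration of revision tracking, the sketch below picks the highest ACTIVE revision per job definition name from input shaped like the jobDefinitions list returned by Batch's DescribeJobDefinitions call. It assumes that jobs are submitted by name without pinning a revision, in which case the latest active revision is the one that runs. Environments that pin revisions explicitly would need to track the pinned value instead.

```python
from typing import Dict, List


def latest_active_revisions(job_definitions: List[dict]) -> Dict[str, int]:
    """Map each job definition name to its highest ACTIVE revision number."""
    latest: Dict[str, int] = {}
    for jd in job_definitions:
        if jd.get("status") != "ACTIVE":
            continue  # deregistered revisions are skipped
        name, rev = jd["jobDefinitionName"], jd["revision"]
        if rev > latest.get(name, 0):
            latest[name] = rev
    return latest


# Made-up sample input for illustration.
definitions = [
    {"jobDefinitionName": "nightly-etl", "revision": 11, "status": "INACTIVE"},
    {"jobDefinitionName": "nightly-etl", "revision": 12, "status": "ACTIVE"},
    {"jobDefinitionName": "nightly-etl", "revision": 13, "status": "ACTIVE"},
    {"jobDefinitionName": "risk-model", "revision": 4, "status": "ACTIVE"},
]
current = latest_active_revisions(definitions)
print(current)
```

Comparing this mapping between two discovery runs yields one change event per job definition whose effective revision moved.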

Why Serverless Assets Affect Change Management

The change management risk in serverless environments is real and underappreciated.

Consider a production incident: a payment processing application begins failing intermittently. The failure trace points to a timeout exception. Investigation reveals that a Lambda function handling payment validation has a 3-second timeout. A recent code deployment added a new validation step that, under load, takes 3.2 seconds. The function times out. Payments fail.

The root cause is a Lambda configuration attribute — the timeout setting — that was never updated when the code changed. Without the Lambda function as a CI in the CMDB, change management had no record that the function was part of the payment processing service. The change that caused the incident bypassed the change advisory process entirely.
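The arithmetic behind this incident is simple enough to check automatically once the timeout is a tracked CI attribute. The sketch below compares a configured timeout against an observed worst-case duration. The timeout_headroom function, its labels, and the 20% default margin are hypothetical choices for illustration, not an AWS or Virima feature.

```python
def timeout_headroom(timeout_s: float, observed_max_s: float, margin: float = 0.2) -> str:
    """Classify a function by how close its observed duration is to its timeout.

    margin is the fraction of the timeout to keep free as a safety buffer.
    """
    if observed_max_s >= timeout_s:
        return "exceeds"   # invocations at this duration will time out
    if observed_max_s > timeout_s * (1 - margin):
        return "at_risk"   # inside the safety buffer
    return "ok"


# The scenario above: 3-second timeout, 3.2 seconds under load.
print(timeout_headroom(3, 3.2))
# Before the new validation step, assuming about 2 seconds per call (made-up figure).
print(timeout_headroom(3, 2.0))
```

A check like this is only possible when both the configured timeout and the function's place in the payment service are recorded, which is the gap the incident exposed.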

Serverless assets create specific change management risks:

- Runtime deprecations. AWS deprecates Lambda runtimes on a published schedule. A function on a deprecated runtime receives no security patches and eventually stops accepting new deployments. Without CMDB tracking of runtime versions, these deprecation deadlines go unmanaged.

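- Layer version updates. Layer versions are immutable, and each function references a specific version, so a new layer version takes effect only as functions are updated to point at it. When a deployment moves many functions to the new version at once, a defect in shared business logic can break dozens of functions simultaneously.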

- Environment variable changes. Lambda environment variables frequently contain configuration that should be in a change record: API endpoint URLs, feature flags, timeout values. These changes are trivial to make in the AWS console and trivial to forget to document.

- Concurrent execution limits. Account-level and function-level concurrency limits affect application performance under load. Changes to these limits are configuration changes with potential impact on dependent services.
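Several of the risks above reduce to comparing two snapshots of the same CI. The sketch below diffs two attribute dictionaries and fingerprints environment variables so that a value change is detectable without storing the values themselves. The attribute names, the config_diff and env_fingerprint helpers, and the sample data are all hypothetical.

```python
import hashlib
import json
from typing import Dict, Tuple


def env_fingerprint(variables: Dict[str, str]) -> str:
    """Stable SHA-256 digest of environment variables (order-independent)."""
    canonical = json.dumps(variables, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def config_diff(before: dict, after: dict) -> Dict[str, Tuple[object, object]]:
    """Return {attribute: (old, new)} for every attribute that differs."""
    changed = {}
    for key in sorted(before.keys() | after.keys()):
        if before.get(key) != after.get(key):
            changed[key] = (before.get(key), after.get(key))
    return changed


# Made-up snapshots for illustration.
snap_old = {
    "runtime": "python3.11",
    "timeout_s": 3,
    "reserved_concurrency": 50,
    "env_fingerprint": env_fingerprint({"PAYMENT_API_URL": "https://old.example.invalid"}),
}
snap_new = {
    "runtime": "python3.12",
    "timeout_s": 3,
    "reserved_concurrency": 50,
    "env_fingerprint": env_fingerprint({"PAYMENT_API_URL": "https://new.example.invalid"}),
}
diff = config_diff(snap_old, snap_new)
print(sorted(diff))
```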

For a foundational guide on building change processes around your CMDB data, see how to create and maintain a reliable CMDB.

Dependency Mapping: Lambda to Business Service

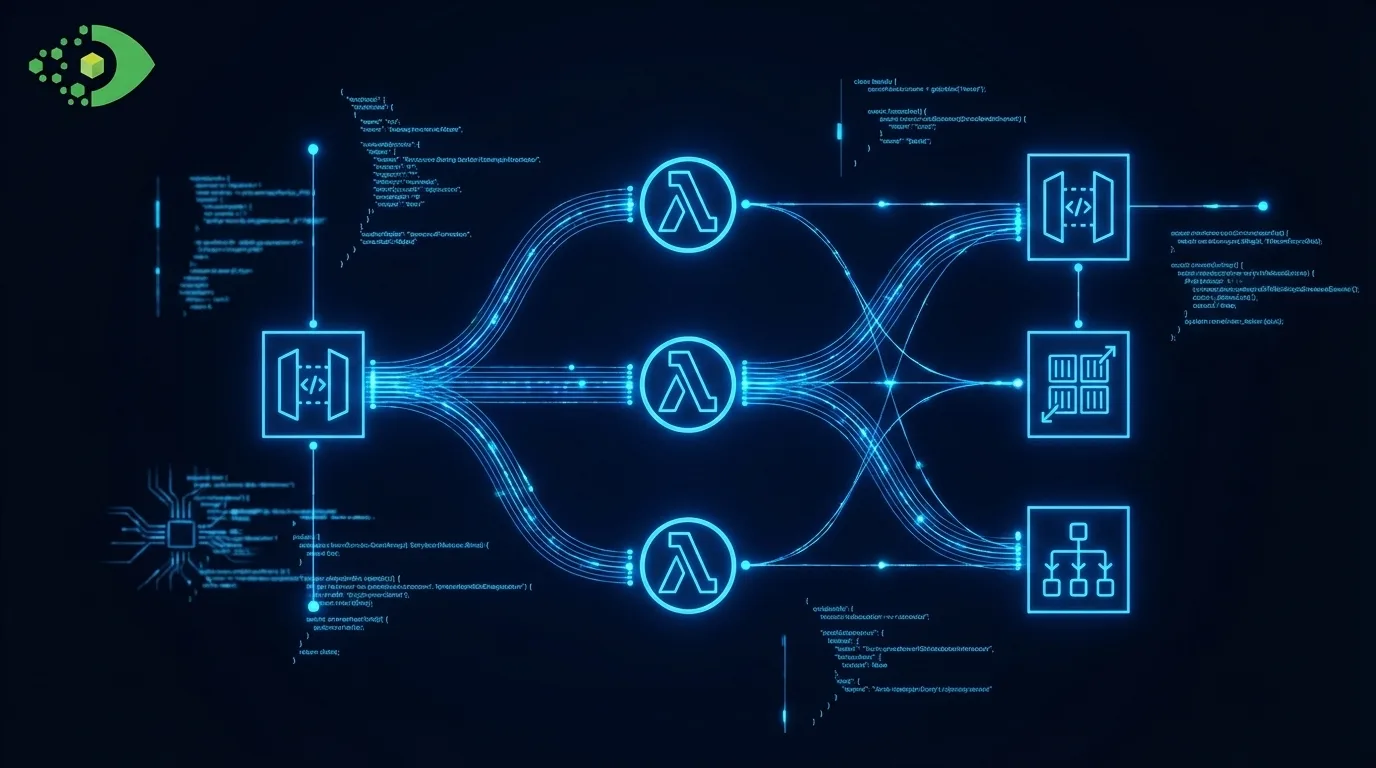

The highest-value output of serverless CMDB coverage is accurate dependency mapping. A Lambda function does not operate in isolation — it is a node in a service dependency graph.

A typical dependency chain for a serverless application:

Business Service: "Order Processing"
└── Application: "order-api"
    └── API Gateway: "order-api-gateway"
        └── Lambda Function: "process-order-handler"
            ├── DynamoDB Table: "orders"
            ├── SQS Queue: "order-fulfillment-queue"
            │   └── Lambda Function: "fulfillment-dispatcher"
            └── Lambda Function: "payment-validator"
                └── External Payment API (third-party CI)

This dependency map in ViVID Service Mapping makes the impact of any Lambda function change immediately visible. If payment-validator has an incident, the map shows that “Order Processing” is the affected business service — without any manual investigation.

Building this map requires that Lambda discovery captures both the function CIs and their relationship data: trigger relationships (what invokes this function?) and dependency relationships (what does this function call?). Both require API-based discovery that goes beyond simple asset enumeration.
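To illustrate how relationship data supports impact analysis, the sketch below encodes the example dependency chain as a plain dictionary and walks it upward from a changed CI to the business services that depend on it. This is a generic breadth-first traversal written for illustration. It is not how ViVID Service Mapping is implemented, and the DEPENDS_ON structure and function name are hypothetical.

```python
from collections import deque
from typing import Dict, List, Set

# Parent -> children edges taken from the example dependency chain.
DEPENDS_ON: Dict[str, List[str]] = {
    "Order Processing": ["order-api"],
    "order-api": ["order-api-gateway"],
    "order-api-gateway": ["process-order-handler"],
    "process-order-handler": ["orders", "order-fulfillment-queue", "payment-validator"],
    "order-fulfillment-queue": ["fulfillment-dispatcher"],
    "payment-validator": ["External Payment API"],
}
BUSINESS_SERVICES: Set[str] = {"Order Processing"}


def impacted_services(ci: str, depends_on: Dict[str, List[str]],
                      business_services: Set[str]) -> Set[str]:
    """Return the business services reachable by walking upward from ci."""
    # Invert the edges so each CI knows which CIs depend on it.
    parents: Dict[str, Set[str]] = {}
    for parent, children in depends_on.items():
        for child in children:
            parents.setdefault(child, set()).add(parent)

    seen, queue = {ci}, deque([ci])
    while queue:
        node = queue.popleft()
        for parent in parents.get(node, ()):
            if parent not in seen:
                seen.add(parent)
                queue.append(parent)
    return seen & business_services


print(impacted_services("payment-validator", DEPENDS_ON, BUSINESS_SERVICES))
```

The traversal only works if both edge types are present: without the trigger edge from the API Gateway to process-order-handler, the walk from payment-validator would stop before reaching the business service.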

How Virima 6.1.1 Discovers Serverless Assets

Virima 6.1.1 extends IT Discovery to AWS Lambda functions, App Runner services, and Batch compute environments, job queues, and job definitions via the AWS API.

Discovered Lambda functions appear as CIs with their runtime, memory, timeout, and trigger relationships captured. App Runner services are discovered with their source configuration and scaling settings. Batch resources appear as structured CIs with parent-child relationships between compute environments, job queues, and job definitions.

All serverless CIs are available as nodes in ViVID Service Mapping, enabling IT operations teams to see how Lambda functions and Batch workloads connect to the business services they support.

For the Azure serverless and PaaS counterparts — including Azure Functions, Cosmos DB, and Key Vault — Virima 6.1.1 provides equivalent discovery coverage on the Microsoft cloud side.

For a complete breakdown of every cloud service Virima discovers, see the complete cloud discovery coverage guide.

Ready to Bring Serverless Into Your CMDB?

See how Virima 6.1.1 discovers AWS Lambda, App Runner, and Batch and maps them to your business services.

Schedule a Demo at Virima.com