Complex IT environment: The Challenges Of Achieving IT Visibility

In a complex IT environment, IT visibility is what separates teams that react from teams that stay ahead. It gives you the ability to monitor and manage every component across your infrastructure, so you can maintain performance, catch issues early, and keep your security posture intact.

But getting there is harder than it sounds. Distributed assets, constantly shifting infrastructure, and siloed data all work against building a single, accurate view of your environment. In fact, 42% of IT leaders say poor visibility makes cloud management harder, highlighting how widespread this challenge has become.

Changes happen fast. New cloud instances spin up, software gets patched, and hardware gets swapped. Your visibility tools have to keep pace. Yet, most organizations now rely on five or more tools to manage cloud infrastructure, which increases fragmentation instead of solving it.

The sheer volume of data flowing through these environments creates its own problems, too. Logs, metrics, events, and network traffic all need to be collected, correlated, and turned into something your team can act on. Layer in security and compliance requirements, and the challenge gets steeper.

Below, we break down the core challenges of achieving IT visibility in a complex IT environment and the practical strategies that actually work.

What makes an IT environment complex?

Distributed and complex IT environments span multiple locations, networks, and platforms. They run on a mix of hardware, software, and network configurations that have grown over time, often unevenly.

The shift to remote work during COVID-19 made this worse. Companies that relied on on-site asset tracking suddenly had devices scattered across home offices, coworking spaces, and branch locations. Traditional methods couldn’t keep up, and IT asset management gaps widened fast.

Typical examples include multinational corporations with branch offices across continents, cloud providers managing data centers in different regions, e-commerce platforms running extensive supply chains, and financial institutions processing transactions across dozens of systems.

All of them depend on interconnected IT systems, and all of them struggle with visibility when those systems are spread thin.

How does a CMDB improve IT visibility?

A CMDB acts as the single source of truth for every configuration item in your environment. It stores the relationships between assets (which server runs which application, which network segment connects to which service) so your team can see dependencies at a glance instead of tracing them manually during an incident.

Without a well-maintained CMDB, visibility is fragmented. You might know what assets you have, but not how they connect or what breaks when one goes down. A CMDB ties it all together and gives every team, from ops to security to change management, the same accurate picture.
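To make the idea of stored relationships concrete, here is a minimal sketch of how configuration items and their links could be indexed. It is an illustration only, not how any particular CMDB product stores data; the asset names, the relationship types (`runs_on`, `connects_to`), and the helper functions are all hypothetical.

```python
from collections import defaultdict

# Hypothetical relationship records: (source CI, relationship type, target CI).
RELATIONSHIPS = [
    ("billing-app", "runs_on", "srv-db-01"),
    ("billing-app", "runs_on", "srv-web-01"),
    ("srv-web-01", "connects_to", "net-segment-a"),
    ("srv-db-01", "connects_to", "net-segment-a"),
]


def build_index(relationships):
    """Index relationships by target so "what depends on X?" is a single lookup."""
    index = defaultdict(list)
    for source, rel_type, target in relationships:
        index[target].append((source, rel_type))
    return index


def direct_dependents(index, ci_name):
    """Return the CIs that point directly at ci_name, sorted for stable output."""
    return sorted(source for source, _ in index.get(ci_name, []))


index = build_index(RELATIONSHIPS)
print(direct_dependents(index, "srv-db-01"))      # ['billing-app']
print(direct_dependents(index, "net-segment-a"))  # ['srv-db-01', 'srv-web-01']
```

Indexing by target is a design choice for this sketch: during an incident the usual question is "what sits on top of this asset?", so the lookup is organized around that question.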

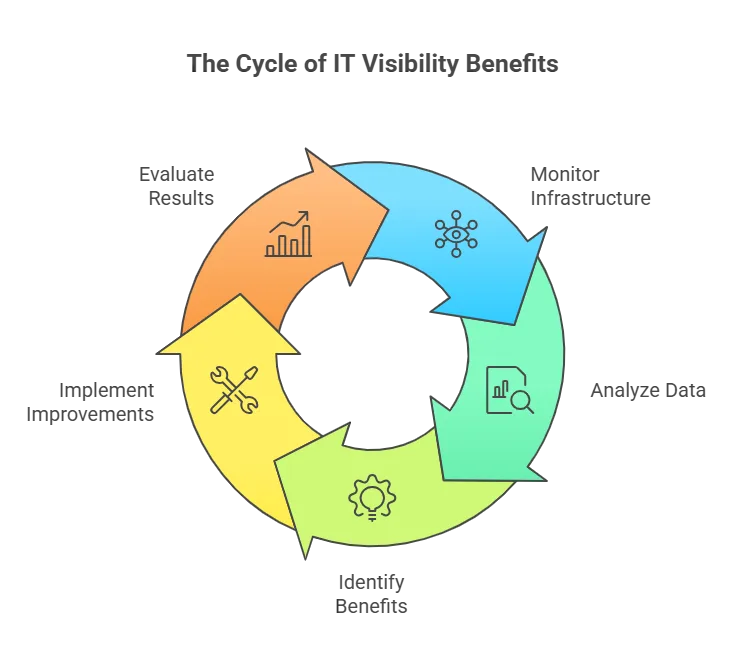

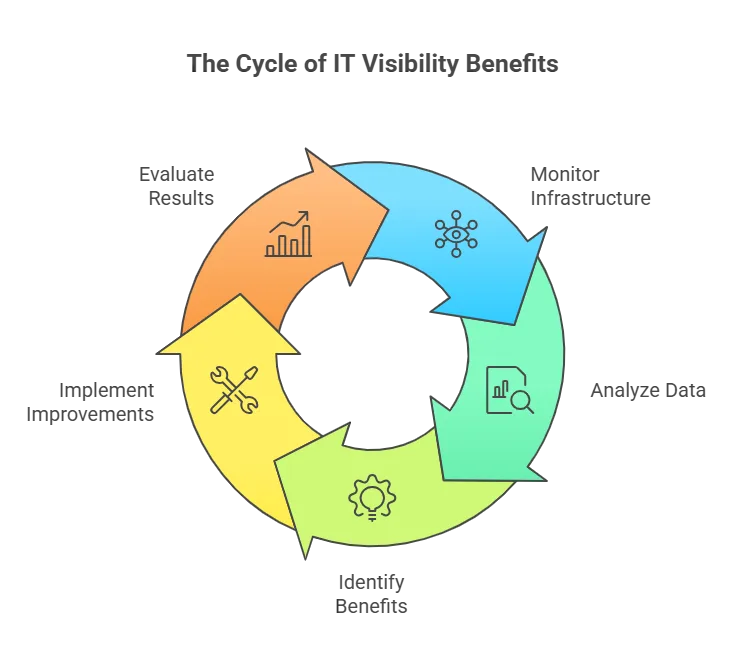

How IT visibility benefits a complex IT environment

IT visibility means you can monitor, analyze, and understand what is happening across your entire IT infrastructure on a recurring basis, not just during an audit or after an outage. Here is what that unlocks.

Stronger security and risk management

When you can see your full environment, you can spot threats before they escalate: an unmanaged device on a subnet you forgot about, a server running an unpatched OS version, or a rogue application opening ports it should not. Monitoring network traffic, detecting anomalies, and identifying vulnerabilities early lets your team stay proactive instead of scrambling after a breach.

Faster troubleshooting and issue resolution

In distributed environments, finding the source of a problem is half the battle. When a customer-facing app slows down and the cause could be a database in one data center, a load balancer in another, or a misconfigured cloud instance, you need dependency context to narrow it down fast. IT visibility gives your team that context, alongside the performance metrics needed to isolate root causes, which cuts downtime and keeps systems reliable.

Smarter resource allocation

Visibility into how assets are actually used reveals where you are over-provisioned and where you are stretched thin. Maybe half your dev servers sit idle while production runs hot, or you are paying for software licenses nobody has opened in six months. That clarity lets you reallocate resources, cut waste, and run a tighter operation.

Proactive capacity planning

Tracking KPIs and analyzing trends over time lets you forecast resource needs before they become bottlenecks. If storage utilization has climbed 8% every quarter, you can project when you will hit the wall and budget for expansion before it becomes an emergency. Instead of reacting to capacity problems, you plan ahead and keep performance stable.
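The storage example can be worked through as simple arithmetic. The sketch below assumes the 8% figure is compound (relative) growth per quarter and uses a hypothetical starting point of 60% utilization and a 90% planning threshold; none of these numbers beyond the 8% come from real data, and the function name is illustrative.

```python
import math


def quarters_until_threshold(current_pct, growth_rate, threshold_pct):
    """Whole quarters until utilization reaches threshold_pct,
    assuming compound growth of growth_rate per quarter."""
    if current_pct >= threshold_pct:
        return 0
    if growth_rate <= 0:
        raise ValueError("growth_rate must be positive for the threshold to be reached")
    # Solve current * (1 + rate)^n >= threshold for n, then round up.
    exact = math.log(threshold_pct / current_pct) / math.log(1 + growth_rate)
    return math.ceil(exact)


# Assumed inputs: 60% used today, 8% growth per quarter, plan to act at 90%.
print(quarters_until_threshold(60, 0.08, 90))  # 6
```

Under these assumptions, utilization is about 88% after five quarters and about 95% after six, so the threshold is crossed during the sixth quarter. A real forecast would be fitted to measured history rather than a single fixed rate.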

These benefits add up to an IT operation that runs smoother, responds faster, and costs less to manage. But achieving them in a distributed, complex IT environment requires overcoming some real obstacles.

Core challenges to IT visibility in distributed environments

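These challenges fall into four groups: a lack of centralized visibility and control, dynamic and evolving infrastructure, data complexity and volume, and security and compliance considerations.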

Lack of centralized visibility and control

Geographically dispersed assets

When your IT assets are spread across multiple locations (branch offices, data centers, cloud regions), no single tool or team has a full picture by default. Your New York data center team does not know what just changed in the Singapore office. Gaps in monitoring appear. Security vulnerabilities go unnoticed. Issues take longer to identify because no one has the complete context.

Mixed technology stacks and legacy systems

Most organizations run a patchwork of old and new systems. Your Windows servers report differently than your Linux boxes, your on-prem monitoring tool does not talk to your cloud console, and that legacy application from 2012 does not expose telemetry at all. Without standardized visibility across the stack, pulling together a single, accurate view of your infrastructure is a constant uphill battle.

Dynamic and evolving infrastructure

Virtualization, cloud, and hybrid environments

Modern infrastructure is elastic. Virtual machines, containers, and cloud instances spin up and down constantly. That flexibility is valuable, but it makes tracking performance, security, and dependencies a moving target. You need tools built for environments that change by the hour, not by the quarter.

Constant software and hardware changes

Patches, upgrades, and hardware replacements happen continuously. Every change is a potential visibility gap if your monitoring tools are not updated to match. Fall behind, and you are flying blind on new configurations while old ones drift out of compliance.

Data complexity and volume

Large-scale data flows

A complex IT environment generates massive amounts of operational data: logs, metrics, events, and network traffic. Collecting and processing all of it requires scalable infrastructure and strong analytics capabilities. Without the right tools, that data becomes noise instead of insight.

Integration and interoperability gaps

Data lives in different databases, applications, and cloud platforms. Getting it all into one place and making it work together is one of the hardest parts of IT visibility. Standardized data formats and robust integration between systems are necessary, but rarely simple to achieve.
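As a rough illustration of what a standardized data format means in practice, the sketch below maps events from two sources into one common shape. Both input layouts (the field names `ts`, `resource`, `msg`, `time`, `host`, and `text`) are hypothetical, as is the target schema; real exports will differ.

```python
from datetime import datetime, timezone


def from_cloud(event):
    """Hypothetical cloud export: epoch seconds plus 'resource' and 'msg' fields."""
    stamp = datetime.fromtimestamp(event["ts"], tz=timezone.utc)
    return {
        "timestamp": stamp.isoformat(),
        "asset_id": event["resource"],
        "message": event["msg"],
        "source": "cloud",
    }


def from_onprem(event):
    """Hypothetical on-prem export: UTC string ending in 'Z' plus 'host' and 'text'."""
    stamp = datetime.strptime(event["time"], "%Y-%m-%dT%H:%M:%SZ")
    stamp = stamp.replace(tzinfo=timezone.utc)
    return {
        "timestamp": stamp.isoformat(),
        "asset_id": event["host"],
        "message": event["text"],
        "source": "on-prem",
    }


cloud_events = [{"ts": 10, "resource": "vm-17", "msg": "instance resized"}]
onprem_events = [{"time": "1970-01-01T00:00:05Z", "host": "srv-db-01", "text": "disk warning"}]

# With every timestamp in the same UTC format, a plain sort gives one timeline.
timeline = sorted(
    [from_cloud(e) for e in cloud_events] + [from_onprem(e) for e in onprem_events],
    key=lambda record: record["timestamp"],
)
for record in timeline:
    print(record["timestamp"], record["source"], record["asset_id"], record["message"])
```

The point of the common schema is that correlation, sorting, and alerting only need to be written once, against one record shape, no matter how many sources feed it.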

Security and compliance considerations

In distributed environments, data moves through multiple locations, networks, and systems. Each hop is a potential exposure point for unauthorized access, breaches, or compliance violations. Encryption, access controls, and network segmentation help, but only if you have clear visibility into where data flows and how security policies are enforced across the entire environment.

Practical strategies for overcoming IT visibility challenges in a complex IT environment

Centralize your monitoring

A centralized monitoring setup functions as your single source of truth. It pulls together application performance data, network traffic, and server health into one view. Getting there means choosing the right combination of tools and processes to collect, analyze, and report data consistently.

The payoff is real: when an incident lands, your team opens one dashboard instead of five, and the time between “something is wrong” and “here is what happened” drops significantly.

Choose the right tools

Your IT visibility tools need to:

- Cover hardware, software, and cloud services end-to-end

- Provide near-real-time monitoring to track performance and flag issues as they emerge

- Offer dashboards and visualizations that make data accessible to different teams

- Integrate with your existing tools and ITSM platforms like ServiceNow, Jira Service Management, Ivanti, HaloITSM, or Cherwell

- Include reporting and analytics for data-driven decisions

Automate where it counts

Automation takes the manual grind out of IT visibility:

- Data collection: Automated IT discovery gathers data from across your environment without manual intervention, and keeps it consistent.

- Anomaly detection: Algorithms flag unusual patterns and trigger alerts so your team can respond before small issues become outages.

- Root cause analysis: Automated dependency mapping helps you trace problems to their source faster than manual investigation.

- Performance tuning: Automation identifies inefficiencies and surfaces recommendations so your team can focus on fixes, not forensics.
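The anomaly detection item above can be sketched with a basic statistical check. This is one simple technique (a z-score against a recent baseline), chosen for illustration; production tools typically use more sophisticated methods. The sample readings, the threshold of 3 standard deviations, and the function name are all assumptions.

```python
from statistics import mean, stdev


def is_anomalous(baseline, value, threshold=3.0):
    """Flag value if it lies more than `threshold` standard deviations
    from the mean of the baseline readings."""
    if len(baseline) < 2:
        return False  # not enough history to judge
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        return value != mu  # flat baseline: any change is unusual
    return abs(value - mu) / sigma > threshold


# Hypothetical CPU utilization readings (percent) from recent polling cycles.
baseline = [41, 43, 40, 42, 44, 41, 43, 42]

print(is_anomalous(baseline, 45))  # False: within normal variation
print(is_anomalous(baseline, 97))  # True: far outside the baseline
```

Comparing each new reading against a separate baseline, rather than including it in the statistics, keeps a single large spike from inflating the standard deviation and hiding itself.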

How does IT visibility reduce MTTR?

Mean time to repair (MTTR) drops when your team can immediately see what is affected by an incident. If a network switch fails at 2 AM, the first question is “which services are down?” Without visibility into dependencies, your engineers are guessing, checking systems one by one, escalating blind.

With accurate service mapping and a current CMDB, the dependency chain is already mapped. Your team sees the blast radius instantly, knows which services are downstream, and starts remediation in minutes instead of hours.
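Working out a blast radius is, at its core, a graph traversal. The sketch below assumes the dependency map is available as a simple dictionary from each component to the components that depend directly on it; the component names and the `blast_radius` helper are hypothetical and not tied to any specific tool.

```python
from collections import deque

# Hypothetical map: component -> components that depend directly on it.
DEPENDENTS = {
    "switch-07": ["srv-db-01", "srv-web-01"],
    "srv-db-01": ["billing-app"],
    "srv-web-01": ["billing-app", "customer-portal"],
    "billing-app": [],
    "customer-portal": [],
}


def blast_radius(dependents, failed):
    """Breadth-first walk collecting everything downstream of the failed component."""
    seen = set()
    queue = deque([failed])
    while queue:
        node = queue.popleft()
        for child in dependents.get(node, []):
            if child not in seen:
                seen.add(child)
                queue.append(child)
    seen.discard(failed)  # guard against cycles that lead back to the start
    return sorted(seen)


print(blast_radius(DEPENDENTS, "switch-07"))
# ['billing-app', 'customer-portal', 'srv-db-01', 'srv-web-01']
```

The traversal itself is cheap. What takes effort is keeping the underlying map accurate, which is why the currency of the CMDB matters more than the algorithm.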

Build a clear visibility strategy

We have seen the most successful visibility rollouts follow a similar pattern:

- Assess: Audit your current infrastructure and identify where visibility gaps exist. You cannot fix what you have not mapped.

- Prioritize: Decide which gaps matter most based on risk, cost, and operational impact. Not everything needs to be solved in quarter one.

- Select tools: Choose monitoring, analytics, and visualization tools that integrate with your existing environment, not ones that force you to rip and replace.

- Align: Make sure your visibility goals support broader business objectives. If leadership does not see the connection, budget conversations get harder.

- Execute: Deploy tools, assign ownership, and train your team. A tool nobody uses is worse than no tool at all.

- Review: Evaluate regularly and adjust as your environment evolves. What worked six months ago may not fit the infrastructure you have now.

How do you achieve IT visibility across hybrid cloud and on-prem?

The key is a discovery solution that works across all environments: on-premises hardware, cloud instances in AWS and Azure, and hybrid configurations. Agentless scanning handles the breadth. Agent-based discovery via a Discovery Agent fills gaps where deeper OS-level data is needed.

The goal is a unified asset inventory that does not care where an asset lives. Whether it is a physical server in your data center or a container in the cloud, it shows up in the same CMDB with the same relationship context. That is what makes hybrid visibility work: one view, regardless of the underlying platform.
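A minimal sketch of that unified inventory idea: records from an agentless scan and from an agent are merged under one asset key, so location becomes just another attribute. The record fields, the `asset_id` key, and the sample values are hypothetical; real discovery data needs more careful identity matching than a single shared key.

```python
def merge_inventories(*sources):
    """Merge asset records keyed by asset_id.
    Later sources fill in or override fields; None values never overwrite data."""
    merged = {}
    for source in sources:
        for record in source:
            asset = merged.setdefault(record["asset_id"], {})
            asset.update({k: v for k, v in record.items() if v is not None})
    return merged


# Hypothetical agentless results: broad coverage, no deep OS detail.
agentless = [
    {"asset_id": "srv-db-01", "location": "on-prem", "ip": "10.0.0.12", "os_build": None},
    {"asset_id": "vm-17", "location": "cloud", "ip": "172.31.4.9", "os_build": None},
]
# Hypothetical agent results: deeper OS-level data for one asset.
agent_based = [
    {"asset_id": "srv-db-01", "os_build": "5.15.0-91"},
]

inventory = merge_inventories(agentless, agent_based)
for asset_id, details in sorted(inventory.items()):
    print(asset_id, details)
```

Here the on-prem server ends up with both its network details and its OS build, while the cloud VM appears in the same inventory with whatever the agentless scan could see.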

How Virima solves IT visibility in a complex IT environment

Virima’s IT Asset Management platform is built for exactly these challenges. It combines IT discovery, a CMDB, service mapping, ITOM, and native ITSM capabilities into a single platform, so you are not stitching together five tools to get one complete picture.

Centralized visibility with the right toolset

Virima gives you a consolidated view of your entire IT infrastructure: hardware, software, cloud services, and the relationships between them. Discovery runs on recurring scheduled scans across your environment, feeding data into a centralized CMDB that stays current without manual updates.

Your team gets one place to check application dependencies, network topology, and asset status. No toggling between a spreadsheet for hardware, a cloud console for VMs, and an ITSM ticket queue for incident history.

Automation that eliminates manual effort

Virima’s IT Discovery automates data collection across on-prem, cloud, and hybrid environments using agentless scanning. Assets, configurations, and dependencies are captured automatically and mapped in the CMDB. When a configuration drifts or a new asset appears on the network, the next scheduled scan picks it up and catalogs it automatically, no manual ticket required.

That automation extends to change management. With accurate, current dependency data, your change advisory board reviews requests against a map that reflects reality, not a spreadsheet from last quarter.
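Conceptually, catching drift and new assets between scheduled scans comes down to comparing two snapshots. The sketch below shows that comparison in generic form. It is not Virima's implementation or API; the snapshot layout (asset ID mapped to a configuration dictionary), the field names, and the `diff_scans` function are assumptions made for illustration.

```python
def diff_scans(previous, current):
    """Compare two scan snapshots (asset_id -> config dict) and report
    new assets, missing assets, and per-field configuration changes."""
    new_assets = sorted(set(current) - set(previous))
    missing_assets = sorted(set(previous) - set(current))
    drifted = {}
    for asset_id in set(previous) & set(current):
        old, new = previous[asset_id], current[asset_id]
        changes = {
            key: (old.get(key), new.get(key))
            for key in sorted(set(old) | set(new))
            if old.get(key) != new.get(key)
        }
        if changes:
            drifted[asset_id] = changes
    return {"new": new_assets, "missing": missing_assets, "drifted": drifted}


# Hypothetical snapshots from two consecutive scan cycles.
last_scan = {
    "srv-db-01": {"os": "Ubuntu 22.04", "open_ports": [22, 5432]},
    "srv-web-01": {"os": "Ubuntu 22.04", "open_ports": [22, 443]},
}
this_scan = {
    "srv-db-01": {"os": "Ubuntu 22.04", "open_ports": [22, 5432, 8080]},
    "laptop-114": {"os": "Windows 11", "open_ports": []},
}

report = diff_scans(last_scan, this_scan)
print(report["new"])      # ['laptop-114']
print(report["missing"])  # ['srv-web-01']
print(report["drifted"])  # {'srv-db-01': {'open_ports': ([22, 5432], [22, 5432, 8080])}}
```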

Security visibility and compliance support

Virima’s discovery and cybersecurity asset management capabilities surface assets across your network, including shadow IT and unmanaged devices that create security blind spots.

With a broad set of extendable probes and both agentless and agent-based options, coverage extends across data center, edge, and cloud environments.

Full asset tracking and license management help your team stay ahead of compliance requirements and reduce the risk of audit surprises. Virima’s service asset and configuration management is PinkVERIFY certified for ITIL 4, giving audit teams a recognized compliance baseline to work from.

When a potential vulnerability is flagged, the CMDB shows you which services depend on the affected asset, so your team can prioritize patching based on actual impact rather than guessing.

Virima includes NIST National Vulnerability Database (NVD) integration at no additional cost. Discovery data is automatically cross-referenced against known CPEs and CVEs, and ViVID service maps let you prioritize remediation based on each asset’s criticality to the business, not just the severity score of the vulnerability itself.
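The difference between ranking by severity alone and ranking by business impact can be shown with a small scoring sketch. This is an illustrative heuristic, not Virima's scoring method: the multiplication of severity by criticality, the 1 to 5 criticality scale, the asset names, and the finding labels are all assumptions.

```python
# Hypothetical findings: a severity score (0-10) per vulnerability, plus a
# business criticality rating (1 = low, 5 = high) for the affected asset.
findings = [
    {"asset": "srv-test-03", "finding": "example-1", "severity": 9.8, "criticality": 1},
    {"asset": "srv-db-01", "finding": "example-2", "severity": 7.5, "criticality": 5},
    {"asset": "srv-web-01", "finding": "example-3", "severity": 6.1, "criticality": 3},
]


def prioritize(items):
    """Order findings by severity weighted by asset criticality, highest first."""
    return sorted(items, key=lambda f: f["severity"] * f["criticality"], reverse=True)


for f in prioritize(findings):
    print(f["asset"], f["finding"], round(f["severity"] * f["criticality"], 1))
```

In this example the highest-severity finding lands last because it sits on a low-criticality test server, while a lower-severity issue on a business-critical database moves to the top of the queue.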

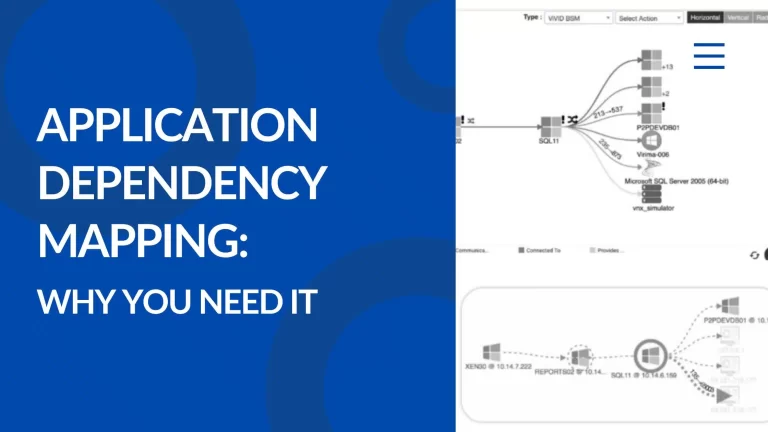

Visual dependency mapping with ViVID

Virima’s ViVID (Virima Visual Impact Display) maps how your IT components interact, visually. Instead of tracing dependencies through spreadsheets or tribal knowledge, your team sees the full picture: which services connect to which infrastructure, and what the impact radius looks like when something changes or fails.

For change management and incident response, this matters. Before your team approves a Friday maintenance window, ViVID shows which business services sit downstream of the server you plan to take offline. During an incident, it shows the blast radius before your engineers start making assumptions. Decisions stay informed, not reactive.

Discovery across every environment

Virima Discovery works across cloud-based, on-premises, and hybrid systems. It auto-discovers IT assets, configurations, and interdependencies, building a centralized inventory that aids monitoring, governance, and compliance.

Configuration changes are flagged at each scan cycle, and potential security risks are cross-referenced against the NIST National Vulnerability Database (NVD) automatically, keeping your team one step ahead.

The dependency mapping Virima provides gives your team clear context for troubleshooting and change planning. When a database server needs patching, you already know which applications sit on top of it and which business services those applications support.

No more guessing which upstream or downstream systems are affected when you need to make a call in a complex IT environment.

Ready to see how Virima brings full IT visibility to your complex environment? Request a demo now.